A viral video shows a Tesla Model 3 on “Full Self-Driving” driving straight through railroad crossing barriers in the Los Angeles area, failing to detect the crossing gate entirely.

The video went viral on the same day as NHTSA’s deadline for Tesla to turn over critical data from its investigation into FSD traffic violations, an investigation that specifically includes railroad crossing failures.

What the video shows

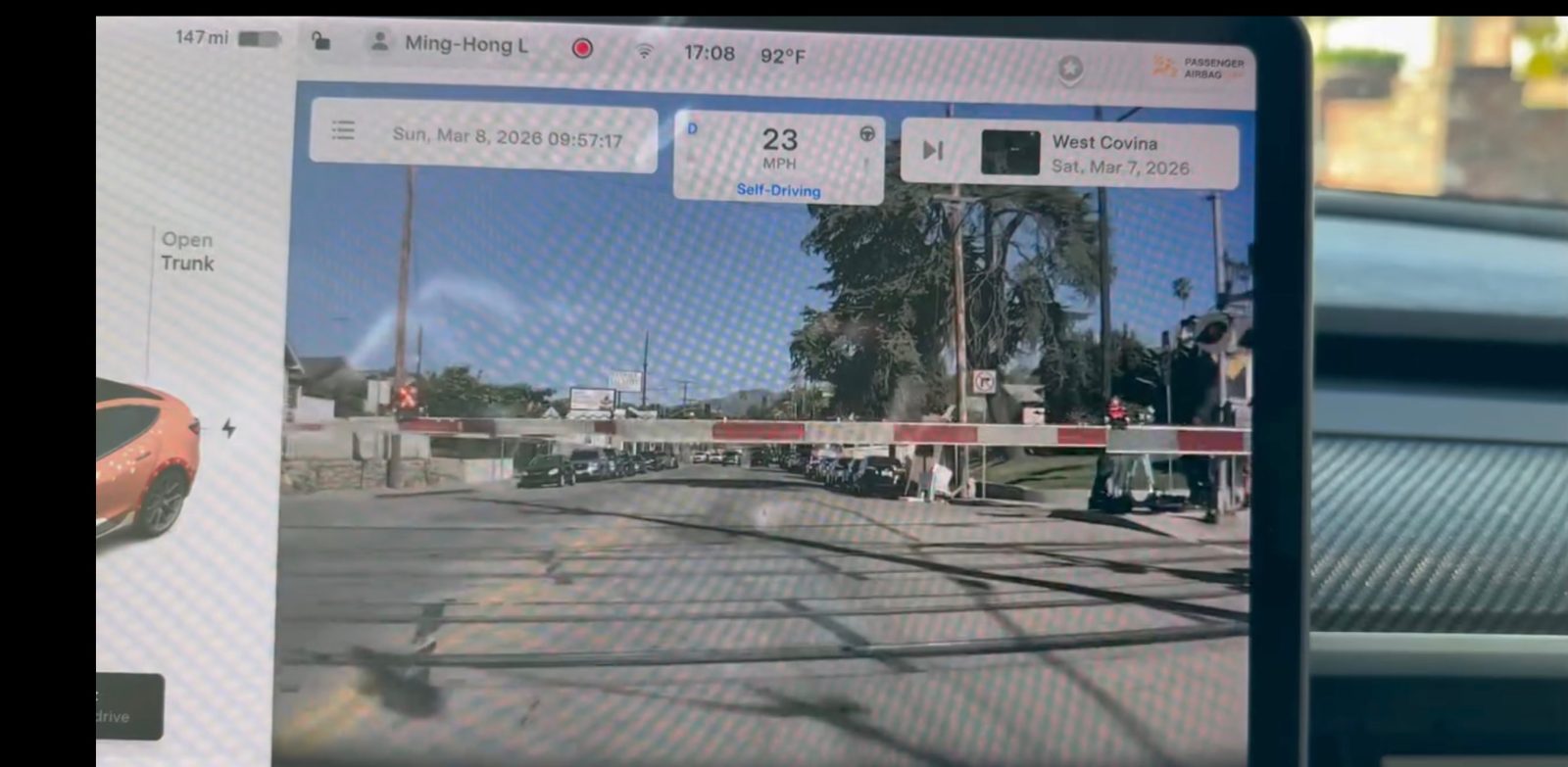

A Threads user named Laushi Liu posted dashcam footage from his Tesla Model 3 on Sunday, March 8. It shows the vehicle in “Full Self-Driving” mode, traveling at 23 mph near West Covina, California. In the video, the car approaches a railroad crossing where the barriers have just come down, and it drives straight through them.

The crossing barriers were roughly at the height of Tesla’s front-facing cameras, which makes the failure particularly concerning. The system showed no sign of detecting the barriers or attempting to slow down. The driver did not intervene in time, although the dashcam footage indicates he pressed the brakes around the moment of impact.

The post, which Liu captioned “Tesla FSD almost killed me today,” has drawn a large number of comments since it went up yesterday. Many commenters pointed out that FSD is a Level 2 system that requires constant driver supervision, while others criticized Tesla for selling a product called “Full Self-Driving” that fails to detect railroad crossing barriers.

We reached out to the owner to confirm which version of FSD was running during the incident and will update when we hear back.

Railroad crossings: a known FSD blind spot

This is far from the first time Tesla’s “Full Self-Driving” has failed at railroad crossings. NBC News investigated the issue extensively and found over 40 reports of FSD mishaps at railroad crossings on social media, interviewing six Tesla drivers who experienced problems, four of whom provided video evidence.

In one documented case, a Tesla Model 3 on FSD was hit by a train in eastern Pennsylvania after the system steered it onto the tracks. The driver and passengers got out before the train struck the car.

The pattern was serious enough that Senators Ed Markey and Richard Blumenthal wrote to NHTSA urging a formal investigation into FSD’s handling of railroad crossings specifically.

NHTSA investigation deadline is today

The timing of this viral video is striking. Today, March 9, is the deadline for Tesla to deliver critical crash data to NHTSA as part of the agency’s sweeping investigation into FSD traffic violations.

NHTSA launched the investigation in October 2025 after connecting 58 incidents to FSD, including 14 crashes and 23 injuries. By December 2025, the documented violations had grown to 80, drawn from 62 driver complaints, 14 Tesla reports, and 4 media accounts.

The investigation specifically covers railroad crossing failures alongside other violations like running red lights and crossing into opposing traffic lanes. The probe encompasses roughly 2.88 million Tesla vehicles equipped with FSD.

Tesla has struggled to comply with NHTSA’s data requests and has secured two deadline extensions: first from the original January 19 date to February 23, and then to today, March 9. The company told NHTSA it had 8,313 records requiring manual review and could process only about 300 per day.

The agency is demanding detailed timelines for each incident starting 30 seconds before the initial traffic violation, including which FSD software version was running, whether drivers received warnings, and whether crashes, injuries, or fatalities resulted.

This incident adds to a growing list of viral FSD failures in recent months. In February, a video of FSD trying to drive a Tesla into a lake went viral. In December, a Chinese Tesla owner crashed head-on during a livestream while demonstrating FSD features.

Meanwhile, Tesla is operating a limited unsupervised Robotaxi service in Austin using the same FSD software that is under federal investigation for these exact types of violations.

Electrek’s Take

The fact that Tesla’s “Full Self-Driving” still can’t reliably detect railroad crossing barriers is alarming. Railroad crossings are not edge cases; they are life-or-death situations with clear visual signals: flashing lights, lowered gates, and painted road markings. If FSD can’t handle them, it certainly shouldn’t be called Full Self-Driving, supervised or not.

Yes, the driver should have been paying attention and intervened. That’s the deal with a Level 2 system. But that argument cuts both ways. Tesla has charged up to $15,000 for FSD and markets it under a name that implies the car drives itself. When the system fails to detect a literal barrier across the road, it’s fair to question whether Tesla’s marketing matches reality, something the California DMV has already ruled it doesn’t.

The timing is almost poetic: this video drops the day Tesla is supposed to finally hand NHTSA the data from its FSD violation investigation, after two deadline extensions. We’ll be watching to see whether Tesla actually delivers, and what that data reveals about just how common these railroad crossing failures really are.
